R2D2 as a model for AI collaboration

Imagine this: you have a text editor, and your team is there too. Your colleagues are making suggestions, answering questions, filling in gaps, and being sounding boards. But one of the team is an AI.

Matt Webb wrote this post yesterday about Ben Hammersley’s new startup. In it he articulates a perspective on collaborative AI that I’ve been thinking about for a while, and I’m excited to see more people engaging in this conversation.

In thinking about human-machine collaboration, I find myself repeatedly returning to a talk I originally gave at Eyeo in 2016, which used C3PO, Iron Man, and R2D2 as three frameworks for how to design for AI, so I took Matt’s post as a nudge to write up those ideas and update them for the current moment.

Here’s the original video of the talk, which I’ve translated into text below if you prefer to read:

This essay is about the way we design relationships between humans and machines — and not just how we design interactions with them (though that is a part of it), but more broadly, what are the postures we have towards machine intelligences in our lives and what are their postures toward us? What do we want those relationships to look and feel like?

As I’ve been thinking through these ideas, science fiction characters are one of the lenses I’ve been finding helpful as a way of thinking about different models for our relationships with machines. Specifically, I’ve been using these three — C3PO, Iron Man, & R2D2 — as notable archetypes that describe different approaches to how we might design machine intelligences to engage with humans.

C3PO

C3PO is a protocol droid; he’s supposedly designed to not only emulate human social interaction, but to do so in a highly skilled way such that he can negotiate and communicate across many languages and cultures. But he’s really bad at it. He’s annoying to most of the characters around him, he’s argumentative, he’s a know-it-all, he doesn’t understand basic human motivations, and often misinterprets social cues. In many ways, C3PO is the perfect encapsulation of the popular fantasy of what a robot should be and the common failures inherent in that model.

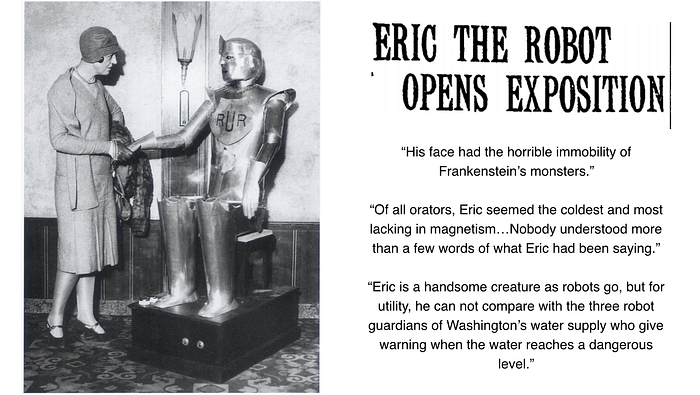

Now this idea didn’t emerge in 1977. The idea of a mechanical person, a humanoid robot, has been around since at least the 1920s. Eric the Robot, or “Eric Robot” as he was more commonly known, was one of the earliest humanoid robots. He was pretty rudimentary, as you can see from the kind of critique that The New York Times leveled at him in the above quotes, which fault his social demeanor and also question his utility: why is a robot that moves and converses better than one that can tell when the water levels in Washington are too high? Is this the best form or use for a robot?

But despite these criticisms, people were fascinated by Eric, as well as by the other early robots of his ilk, because it was the first moment that there was this idea, this promise, that machines might be able to walk among us and be animate. We’ve had this idea in our social imagination for nearly a century now—the assumption that, given sophisticated enough technology, we would eventually create robotic beings that would converse and interact with us just like human beings.

Our technology today is many orders of magnitude more sophisticated than Eric’s creators could have imagined. And we are still trying to fulfill this promise of a humanoid robot. We see the state of the art today with robots like Sophia.

This is so much closer to that humanoid ideal than Eric was, but it falls so short and just feels awkward and creepy.

On the software side (“bots” vs. “robots”), we have examples of the failure of the C3PO model in the voice assistants we use every day. They’ve gotten pretty “good”, but still can’t understand context well enough to respond appropriately in a consistent way, and the interactions are far from satisfying. It turns out that even with incredibly rich computational and machine learning resources, interacting like a human is really hard. It’s hard to program a computer to do that in any satisfying way because, in many ways, it’s hard for humans to do successfully too. We get it wrong so much of the time.

Interacting like a human is hard (even for humans).

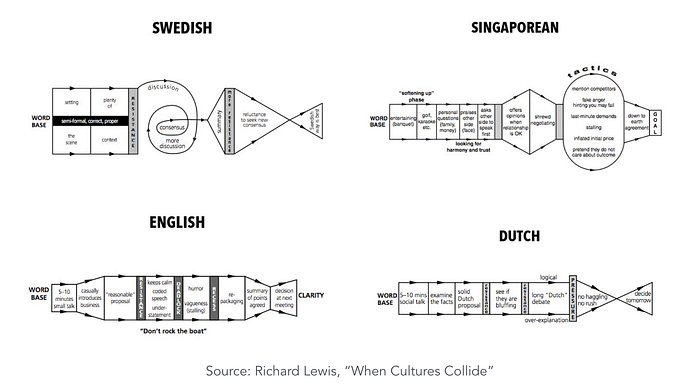

We don’t all talk to each other the same way. We don’t all have the same set of cultural backgrounds or conversational expectations. Below are charts created by British linguist Richard Lewis to show the conversational process of negotiating a deal in different cultures.

These challenges have come to the fore lately with everyone from search engines to banks trying to create convincing conversational interfaces. We see companies struggling with the limitations of this approach. In some cases they have addressed those challenges by hiring humans to either support the bots or in some cases actually pose as chatbots.

Welcome to 2020, where humans pretend to be bots who are in turn pretending to be human.

Stop trying to make machines be like people

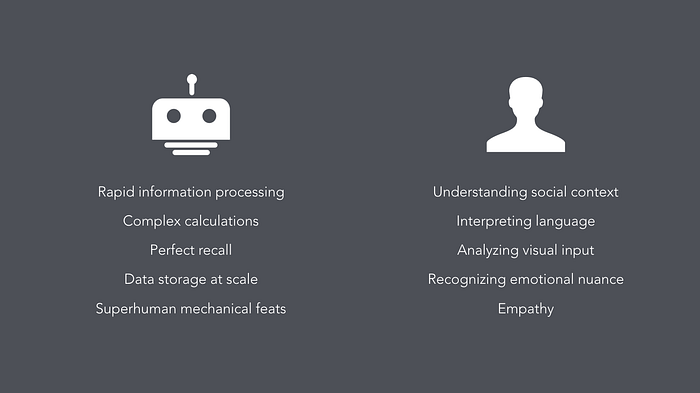

Obviously, the ideal of creating machines that interact with us just like people do is incredibly complicated and may actually be unattainable. But more importantly, this isn’t actually a compelling goal for how we should be implementing computational intelligence in our lives. We need to stop trying to make machines be like people and find some more interesting constructs for how to think about these entities.

People started with the C3PO model because human conversation is the dominant metaphor we have for interacting and engaging with others. My hypothesis is that this whole anthropomorphic model for robots is fundamentally just a skeuomorph, adopted because we haven’t developed new constructs for machine intelligence yet, in the same way that desk and file-drawer metaphors were the first attempts at digital file systems and, when TV first emerged, people read radio scripts in front of cameras.

Instead, a more compelling approach would be to exploit the unique affordances of machines. We already have people. Humans are already quite good at doing human things. Machines are good at doing different things. So why don’t we design for what they’re good at, what their unique abilities are and how those abilities can enhance our lives?

Iron Man

Iron Man (as a metaphor) is an example of using the capabilities of machines to provide us with functional enhancements. You’re taking human abilities and augmenting them with mechanical abilities — superhuman strength, superhuman speed, and in the case of Jarvis, augmented information and intelligence. All the technology here is supporting Tony Stark, giving him superpowers.

This idea of machines augmenting our own abilities isn’t just utility though. It exists on a spectrum representing the distance between the person and the technology. And as the distance between the person and technology increases, you start to get deeper into questions of relationships and communication.

Machine as prosthesis

Let’s start at one end: The idea of the machine as a prosthesis — something that is wired directly into our bodies or our brains that can perform with us. Some of those are prosthetics in the traditional sense, where they’ve been developed as assistive devices to adapt for a disability, like cochlear implants, or prosthetic limbs to replace missing limbs. What’s interesting here is there starts to be a blurry line between replacing a missing limb and adding new capabilities that biological limbs don’t have — someone with a prosthetic hand who has a USB drive in his index finger, or runners who have prosthetics designed to run more efficiently than a biological foot. The line between adaptation and augmentation gets very fuzzy.

It’s fascinating to watch how prosthetic technologies often begin as assistive, as adaptations for disability, and then get repurposed (and remarketed) as augmentation. For example, we see hearing aid technologies being repositioned in products like the Nuheara and Olive earbuds, which are designed for people who don’t have hearing loss, but who want to hear in different ways, to manipulate and enhance their environmental soundscape.

Machine as servant

Now there’s a lot of fascinating work being done in that realm of prosthesis and human augmentation, but to get closer to thinking about relational dynamics and design, we need to have more space and explicit communication between person and machine. And we start to get that a little bit as we move down the spectrum, into this construct of a machine as a servant or a butler. We see this with automotive tech like assistive parking and driving, with Slack bots that automate actions in our workflows, or with voice assistants like Alexa, Siri, and Google.

And in these examples, there starts to be a relational dynamic, but it’s pretty limited to these master / servant roles because in these cases, the machine still has no agency. It is there for delegation, to follow explicit commands in a reliable and consistent way.

Machine as collaborator

But I think things get really interesting, both functionally and aesthetically, when we get to the end of the spectrum where the machine is not only separate from the self but also has agency — it has ways of learning and rubrics for making its own decisions. Here we start to get into the question of what it means for machines to be not just servants, but collaborators — entities that work with us to contribute their own unique form of intelligence to a process.

When we’re talking about machines that have agency of some sort, we’re working with entities over which we don’t have complete control and that opens up the possibility of many different kinds of relationships with these entities. They could be collaborators, but they could also be antagonists, friends, bureaucrats, pets, and more.

R2D2

This is where I look to R2D2, who provides a fascinating construct for how we might imagine our future relationships with robots. R2D2 clearly has agency: he often follows orders from humans, but just as often will disobey orders to pursue some higher-priority goal. And R2D2 has his own language. He doesn’t try to emulate human language; he converses in a way that is expressive to humans, but native to his own mechanical processes. He speaks droid. The other characters form relationships with him, but it is a completely distinct kind of relationship from the one that they have with other people or the one that Luke has with his X-Wing.

“How does the digital sensor perceive the puppy?”

— Ian Bogost, Alien Phenomenology

Timo Arnall took a stab at exploring this question nearly a decade ago in his wonderful film, Robot Readable World, which starts to give us a sense of how the world looks through the gaze of a number of different computer vision algorithms. As we design interactions with these kinds of machine intelligences, what are their versions of R2D2’s language? What expressions feel native to their processes? What unique insights can we gain from the computational gaze?

Collaborating with machine intelligence means being able to leverage that particular, idiosyncratic way of seeing and incorporate it into creative processes. This is why we universally love the “I trained a neural net on [x] and here’s what it came up with” memes. They have a delightful “almost-but-not-quite-ness” that lets us enjoy the strangeness of that unfamiliar gaze, and that can also help us see hidden patterns and truths in our human artifacts.

The increasing accessibility of tools for working with machine learning means that I’m seeing more examples of artists, writers and others treating the machine as collaborator — working with the computational gaze to create work that is beautiful, funny, and strange. Folks doing interesting work in this arena include Allison Parrish, Janelle Shane, Mario Klingemann, Robin Sloan, and Ross Goodwin. Their processes often involve training neural networks, and then using output that is often strange or surreal as a foundation for the artist to riff and build upon.

In Robin Sloan’s Writing with the Machine project, he described the experience of using it as “like writing with a deranged but very well-read parrot on your shoulder…The world doesn’t need any more dead-eyed robo-text. The animating ideas here are augmentation; partnership; call and response.”

This process uses the strange aesthetics of machine processing to create material that a human couldn’t, but that then serves as foundation and inspiration for human creativity.

As we think about the future of collaboration with AI, some questions to consider: How does an intelligent machine with agency communicate with the humans around it? How does it do so in a way that feels in keeping with its process and purpose? And how can people give feedback and negotiate in a way that feels clear and empowering?

Let’s do more carpentry

The above people and projects are just a few examples of work that points the way toward a much more interesting future with our computational compatriots. And we need more of that experimentation. I hope to make and see more things that can explore these nascent and rich spaces of possibility and point in new directions. Let’s not let the future of AI be weird customer service bots and creepy uncanny-valley humanoids. Those are the things people make because they don’t have the new mental models in place yet. They are the skeuomorphs for AI; they are the radio scripts we’re reading into television cameras.

Our future with machines is going to be much weirder and more complicated than that. I think we will have a wide variety of relationships with machine intelligence — a heterogeneity of interactions that parallel but do not mimic those we have with humans. These machines will be alien others that coexist with us — more like companion species, as Donna Haraway frames it, than like unsatisfyingly second-rate humans. But we need to design that future, to experiment and make things that describe what might be possible, and to create the kinds of systems that make our world richer, stranger, and more full of possibility.